Gemini Image to CAD Test

One area where I have found LLMs lag significantly (at least at the start of 2026) is direct control of engineering software. The goal of this project was to get an initial assessment of how well current LLMs can create parts in 3D parametric modeling software. Given the models' improving multimodality, I decided to specifically benchmark the conversion of dimensioned drawings into STEP files.

I selected Fusion360 as the CAD environment because it has a well-documented Python API, meaning LLMs likely have enough knowledge of it from their training data to be useful out of the box.

To complete this project I had to create several subcomponents:

Fusion360 Addin: this allows me to stream Fusion360 Python API calls directly into the app.

Benchmark Script: this runs the benchmark on the given drawing images and pipes the LLM outputs to Fusion.

Autograder: after the benchmark is run, this gives me an automated way of assessing the results.
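A minimal sketch of how a benchmark script could hand model output to Fusion is shown below. It is not the actual script: it assumes the model returns its code inside a fenced Python block, and that the Addin accepts one snippet per TCP connection, reading until the client closes its write side. The host, port, and function names (`extract_code`, `send_to_fusion`) are hypothetical.

```python
import re
import socket

# Hypothetical address of the Addin's listening socket (not given in this write-up).
FUSION_HOST = "127.0.0.1"
FUSION_PORT = 9000

# Captures the body of a fenced block tagged python/py (or untagged) in a model response.
_FENCE_RE = re.compile(r"```(?:python|py)?\s*\n(.*?)```", re.DOTALL)


def extract_code(response: str) -> str:
    """Return the first fenced code block in an LLM response, or the raw text if there is none."""
    match = _FENCE_RE.search(response)
    return match.group(1).strip() if match else response.strip()


def send_to_fusion(code: str, host: str = FUSION_HOST, port: int = FUSION_PORT,
                   timeout: float = 60.0) -> str:
    """Send one snippet to the Addin and return its reply ("OK" or an error message)."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(code.encode("utf-8"))
        # Close our write side so the server knows the whole snippet has arrived.
        sock.shutdown(socket.SHUT_WR)
        chunks = []
        while True:
            data = sock.recv(4096)
            if not data:  # server closed the connection after replying
                break
            chunks.append(data)
    return b"".join(chunks).decode("utf-8")
```

Stripping the fence on the client side keeps the Addin simple: it only ever sees plain Python, and any prose the model wraps around its code never reaches `exec`.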

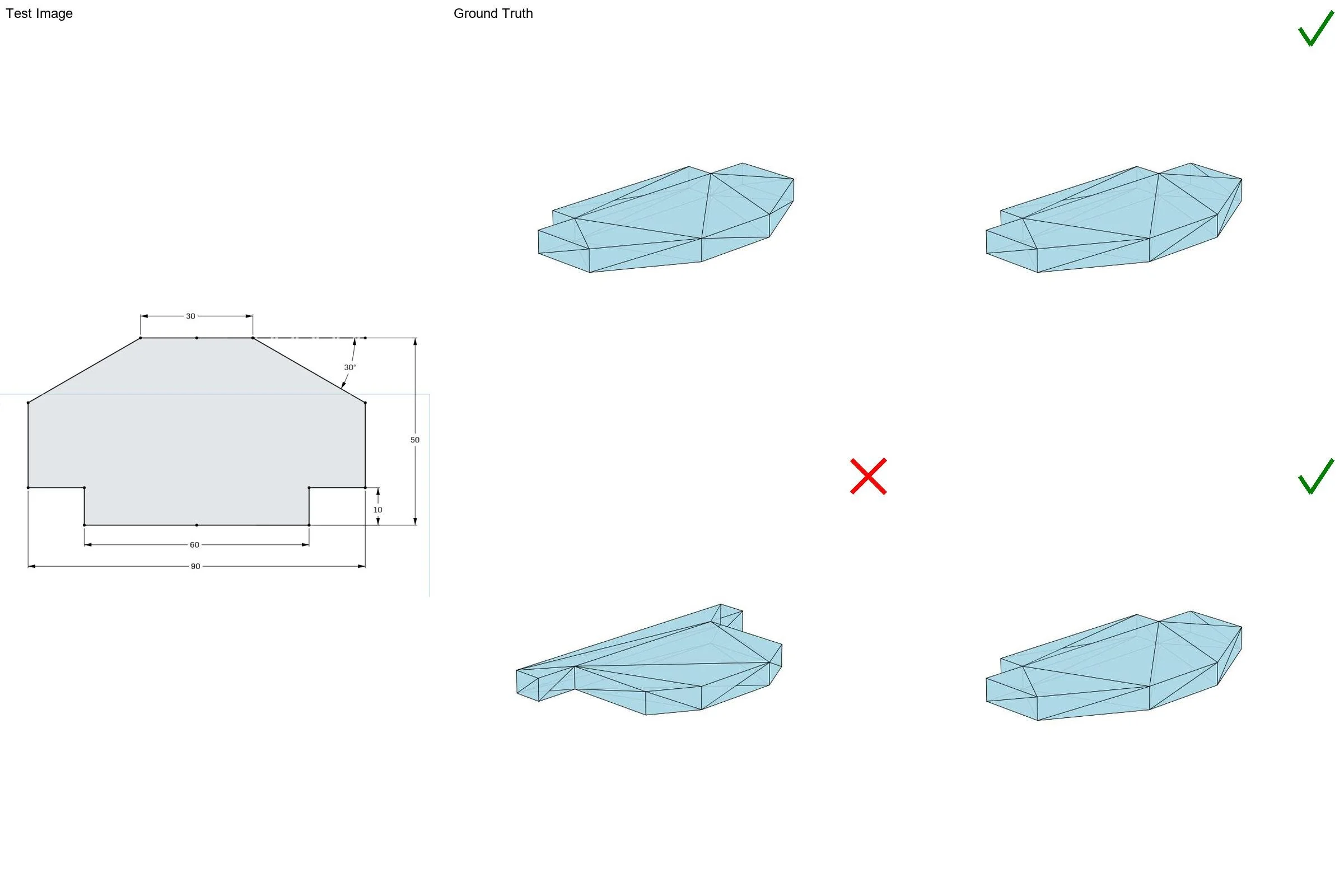

Autograder

The autograder uses CadQuery in Python to compare the ground truth model I generated against each of the 3 trial outputs per test image. It computes each shape's outer bounding box and volume, and a trial passes only if each is within 1% of the ground truth value. The script also generates images of each model and collages them together with the test drawing. An example is shown.
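The pass criterion can be sketched as follows. This is a hedged reconstruction, not the author's script: it assumes "1% on each" means every bounding-box extent and the volume must individually fall within 1% of the ground truth. `ShapeMetrics`, `passes`, and `metrics_from_step` are hypothetical names; the CadQuery calls used (`cq.importers.importStep`, `Shape.BoundingBox()` with its `xlen`/`ylen`/`zlen` fields, and `Shape.Volume()`) are real CadQuery APIs, imported lazily so the comparison logic runs without CadQuery installed.

```python
from dataclasses import dataclass

TOLERANCE = 0.01  # 1% relative tolerance, as used for the pass criterion


@dataclass
class ShapeMetrics:
    """Bounding-box extents (mm) and volume (mm^3) of a solid."""
    xlen: float
    ylen: float
    zlen: float
    volume: float


def within_tolerance(value: float, reference: float, tol: float = TOLERANCE) -> bool:
    """True if value is within tol (relative) of the reference value."""
    return abs(value - reference) <= tol * abs(reference)


def passes(trial: ShapeMetrics, truth: ShapeMetrics, tol: float = TOLERANCE) -> bool:
    """A trial passes only if all three extents and the volume are within tolerance."""
    pairs = [
        (trial.xlen, truth.xlen),
        (trial.ylen, truth.ylen),
        (trial.zlen, truth.zlen),
        (trial.volume, truth.volume),
    ]
    return all(within_tolerance(v, ref, tol) for v, ref in pairs)


def metrics_from_step(path: str) -> ShapeMetrics:
    """Measure a STEP file with CadQuery (assumes the file holds a single solid)."""
    import cadquery as cq  # lazy import: only needed when reading real STEP files

    shape = cq.importers.importStep(path).val()  # first shape in the file
    bb = shape.BoundingBox()
    return ShapeMetrics(bb.xlen, bb.ylen, bb.zlen, shape.Volume())
```

Checking each extent separately, rather than only the bounding-box volume, catches parts that have the right overall size but are stretched along one axis and squashed along another.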

My initial testing with models from OpenAI, Google, and Anthropic showed that most models are essentially unusable for this task; Gemini-2.5-pro was genuinely the only one worth testing. Additionally, none were able to take a 2D drawing of a 3D object and reproduce it with any success. I therefore decided to benchmark Gemini on 2D drawings of 2D shapes and have it extrude each result to a constant thickness of 10 mm.
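One consequence of the constant 10 mm extrusion is that grading becomes largely a 2D check: the bounding box's z-extent should be exactly 10 mm, and the volume is simply the profile area times 10 mm, so when the thickness is exactly 10 mm a 1% volume tolerance is a 1% tolerance on the sketched area. The hypothetical helpers below illustrate this for straight-edged profiles using the shoelace formula; they assume holes are non-overlapping and lie inside the outer boundary, and profiles with arcs or circles would need their own area terms.

```python
THICKNESS_MM = 10.0  # constant extrusion depth used for every test part


def polygon_area(points):
    """Area of a simple polygon via the shoelace formula (vertex order may be CW or CCW)."""
    n = len(points)
    twice_area = 0.0
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]  # wrap around to close the polygon
        twice_area += x1 * y2 - x2 * y1
    return abs(twice_area) / 2.0


def extruded_volume(outer, holes=(), thickness=THICKNESS_MM):
    """Volume of a straight-extruded profile: (outer area - hole areas) * thickness."""
    area = polygon_area(outer) - sum(polygon_area(h) for h in holes)
    return area * thickness


# Example: a 100 x 50 mm plate with one 10 x 10 mm square cut-out.
plate = [(0, 0), (100, 0), (100, 50), (0, 50)]
cutout = [(10, 10), (20, 10), (20, 20), (10, 20)]
print(extruded_volume(plate, [cutout]))  # 4900 mm^2 * 10 mm = 49000.0 mm^3
```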

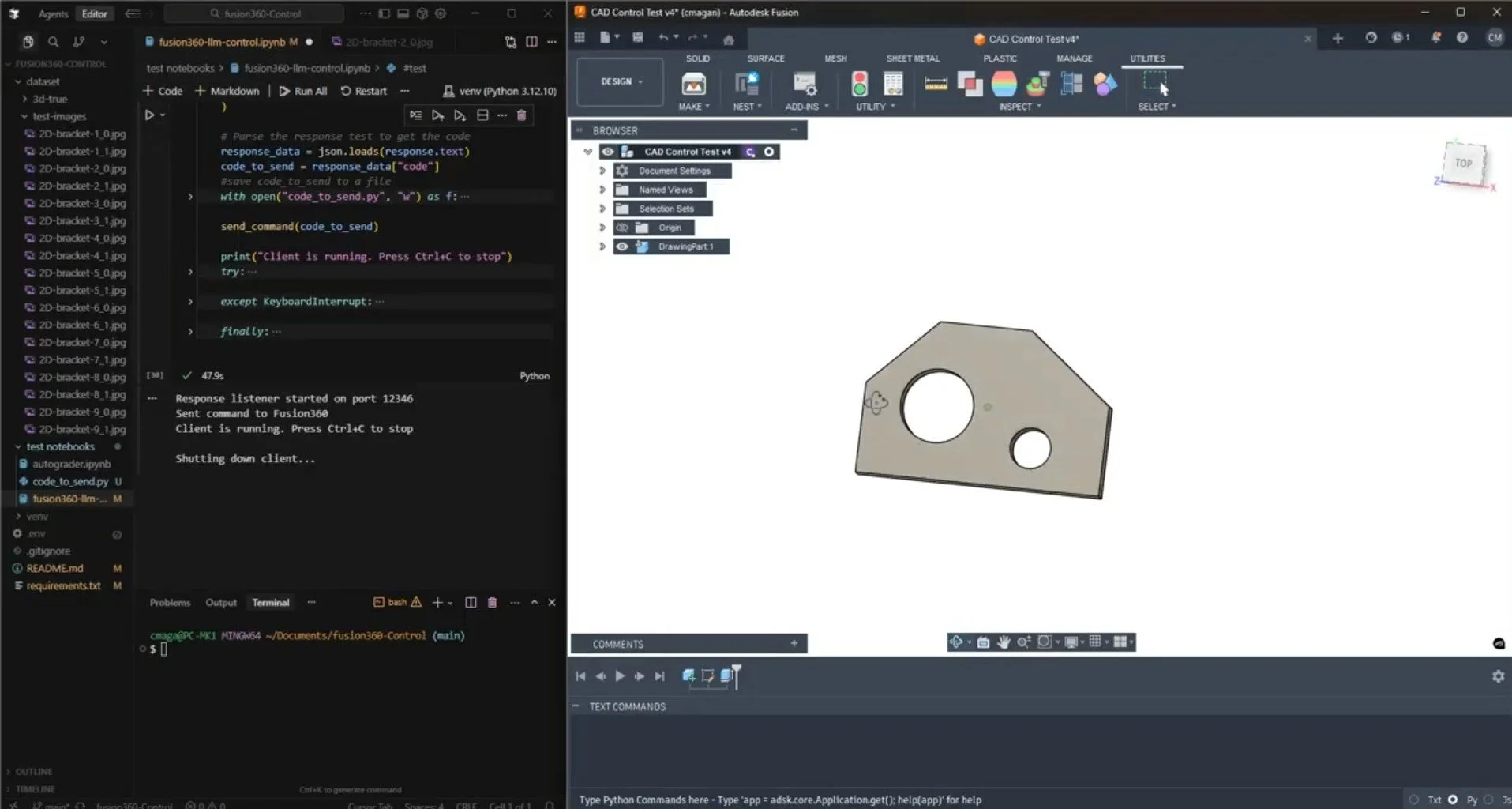

Fusion360 Addin

I did not want to constantly copy and paste between an LLM and the Fusion console, so I built a custom Fusion360 Addin that lets me stream in external code. (Note: continuously executing unreviewed LLM-generated Python code on your system is potentially dangerous for several obvious reasons.) Essentially, the Addin opens a socket and attempts to execute any Fusion360 Python API code it receives, then returns either an OK message or the error Fusion generates from that code. Longer term, I may continue to build out this Addin, which would let me (or an AI) write and use ad hoc macros for Fusion360.
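The execute-and-reply behavior described above can be sketched with the standard library alone. This is a minimal sketch, not the Addin's actual code: the port, the one-snippet-per-connection framing, and the names `execute_snippet` and `SnippetHandler` are hypothetical. Inside Fusion, the namespace would also need `adsk.core` and `adsk.fusion` available, and because the Fusion API is not thread-safe, a real add-in would run the listener on a background thread and hand each snippet to the main thread (for example via a custom event) instead of calling `exec` directly from the handler as done here.

```python
import socketserver
import traceback

# Hypothetical port; bound to localhost only so it is not exposed to the network.
HOST, PORT = "127.0.0.1", 9000


def execute_snippet(code: str, namespace: dict) -> str:
    """Run one snippet and return "OK" or the formatted traceback.

    WARNING: exec() runs arbitrary code with this process's privileges.
    """
    try:
        exec(code, namespace)
    except Exception:
        return traceback.format_exc()
    return "OK"


class SnippetHandler(socketserver.StreamRequestHandler):
    """Reads a whole snippet (until the client closes its write side) and replies."""

    # Shared across connections so later snippets can reuse names from earlier ones.
    namespace: dict = {}

    def handle(self):
        code = self.rfile.read().decode("utf-8")
        reply = execute_snippet(code, self.namespace)
        self.wfile.write(reply.encode("utf-8"))


def serve():
    """Block forever, handling one connection at a time."""
    with socketserver.TCPServer((HOST, PORT), SnippetHandler) as server:
        server.serve_forever()
```

Returning the full traceback rather than just the exception message gives the model the line number and call stack, which is usually what it needs to repair its own code on the next attempt.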